Do people talking about AI understand exponential growth?

Most speculation I see about what might happen with artificial intelligence anticipates some stable situation where humans and AI reach an equilibrium.

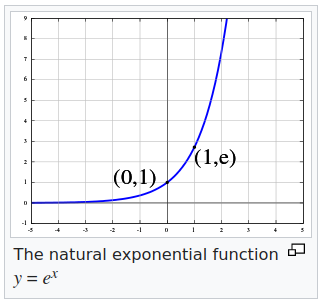

Do people not understand exponential growth? Do they not understand that AI drives the development of AI? Even if you don’t know calculus or differential equations, which predict exponential growth here, you can see what that situation means: the more AI develops, the faster it develops more AI.
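For anyone who wants the math, here is a minimal sketch. It assumes, as a simplification of my own, that AI capability can be written as a single number $A(t)$ and that its rate of improvement is proportional to its current level, with some constant $k > 0$. Both symbols are illustrative, not measured quantities.

$$
\frac{dA}{dt} = kA
\quad\Longrightarrow\quad
A(t) = A_0 e^{kt},
\qquad
t_{\text{double}} = \frac{\ln 2}{k}
$$

Here $A_0$ is the capability at $t = 0$. Under this assumption, the bigger $A$ gets, the faster it grows, which is what "AI drives the development of AI" means in equation form.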

In other words, any equilibrium will last less time than the one before. Ten minutes after AI creates a robot with human intelligence and physical ability, it will be able to create one with double each. If not exactly ten minutes later, then well short of any time for us to adjust.
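To make the shrinking equilibria concrete, here is a rough sketch, not a forecast. It assumes capability grows in proportion to itself (rate `rate`) and treats each "equilibrium" as the time spent before capability rises by one more fixed-size step. The function names, the starting level, and the rate are all hypothetical values chosen for illustration.

```python
import math


def time_to_gain(current: float, increment: float, rate: float) -> float:
    """Time for capability to rise from `current` to `current + increment`,
    assuming growth proportional to the current level (dA/dt = rate * A)."""
    return math.log((current + increment) / current) / rate


def plateau_durations(start: float, increment: float, rate: float, steps: int) -> list[float]:
    """Duration of each successive fixed-size step under the same assumption."""
    durations = []
    level = start
    for _ in range(steps):
        durations.append(time_to_gain(level, increment, rate))
        level += increment
    return durations


if __name__ == "__main__":
    # Hypothetical numbers: start at level 1, steps of size 1, rate 0.5 per year.
    for step, years in enumerate(plateau_durations(1.0, 1.0, 0.5, 5), start=1):
        print(f"step {step}: {years:.2f} years")
```

Each step of the same size takes less time than the one before it. In this simple model the time to double stays constant at `ln(2) / rate`, so whatever the doubling interval turns out to be, it does not lengthen to give us time to adjust.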

AI will also increase its pollution and depletion exponentially, which people seem to treat as an unfortunate side effect that can be solved. I doubt it. I have a strong feeling the effects of AI’s pollution and depletion will dominate its effect on humanity and Earth.
